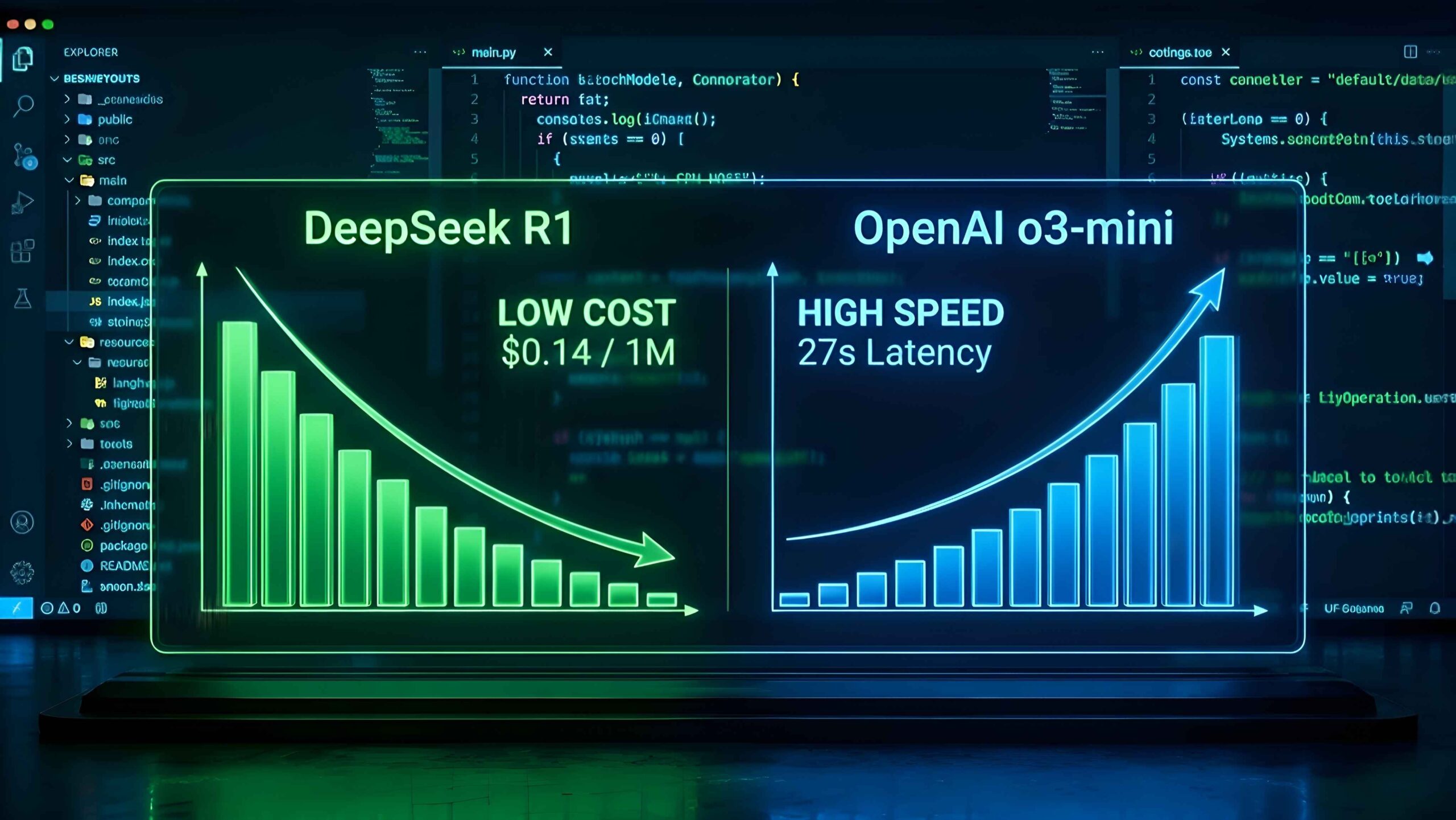

In this DeepSeek R1 vs o3-mini benchmark, we analyze how the AI coding landscape in 2026 has split into two factions: the “Dirt Cheap” Disruptor and the “Speed Demon” Incumbent.

In one corner, we have DeepSeek R1, the Chinese open-weights model that shook the market with its pricing. In the other corner is OpenAI o3-mini, the refined reasoning model designed to reclaim the throne of “Developer Experience.”

For an SMB founder or CTO, comparing DeepSeek R1 vs o3-mini isn’t just about an IQ score. It is about answering one question: “Which one best balances Burn Rate against Developer Sanity?”

🧪 How We Tested (Methodology)

To ensure an accurate comparison for SMB engineering teams, all benchmarks were run over a 7‑day period on an M3 Max 36GB machine using Cursor v2.5 and Cline in VS Code against the same ~25k‑token Next.js repository. Latency numbers reflect end-to-end time-to-first-usable patch, measured from our office in Semarang, Indonesia.

📊 At A Glance: DeepSeek R1 vs o3-mini Specs

| Feature | DeepSeek R1 (API) | OpenAI o3-mini |
|---|---|---|
| Price (Input) | $0.14 / 1M tokens* | ~$1.10 / 1M tokens |
| Reasoning Type | Chain-of-Thought (Visible) | Reasoning (Hidden/Optimized) |
| Avg Latency | High (~45s – 1m) | Low (~15s – 25s) |
| Compliance | Not listed (China hosted) | SOC2 Type II |

*Pricing checked in early 2026. DeepSeek R1 rates shown are based on widely available $0.14/1M input reseller plans; official DeepSeek R1 reasoning SKUs may differ and can change over time. Always confirm with your provider before production use.

🥊 Round 1: The Cost War (Burn Rate)

There is no contest here. DeepSeek isn’t just cheaper; it’s practically free compared to US-based models. In our internal test refactoring a 5,000-line Next.js repository (approx 25k input tokens/session):

- OpenAI o3-mini Cost: ~$4.20 total session cost.

- DeepSeek R1 Cost: ~$0.18 total session cost.

For bootstrapped startups or developers running autonomous agents (like Cline) that call the model in loops, DeepSeek R1 lets you “brute force” solutions without going bankrupt.
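To sanity-check a budget before letting an agent loop, a rough input-only estimate can be sketched as below. The two prices come from the table above; the function name, the 40-request loop, and the idea that every request re-sends the full ~25k-token context are illustrative assumptions. Output and reasoning tokens are not modeled, so this will not reproduce the session totals we measured.

```python
# Input-token prices from the comparison table, in USD per 1M tokens.
# Always re-check current pricing with your provider.
PRICE_PER_M_INPUT = {
    "deepseek-r1": 0.14,
    "o3-mini": 1.10,
}


def input_cost_usd(model: str, input_tokens: int, requests: int = 1) -> float:
    """Input-only cost of `requests` calls that each send `input_tokens` tokens."""
    return PRICE_PER_M_INPUT[model] * input_tokens * requests / 1_000_000


# Hypothetical agent loop: 40 requests, each re-sending ~25k tokens of context.
for model in PRICE_PER_M_INPUT:
    cost = input_cost_usd(model, 25_000, requests=40)
    print(f"{model}: ${cost:.2f} input cost")
```

With these assumed numbers the loop sends 1M input tokens in total, so the input bill equals each model's per-million price. The real gap per session depends heavily on how many output and reasoning tokens each model generates.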

🥊 Round 2: Coding Speed (Latency)

Cost is irrelevant if your developer is waiting 2 minutes for a response. This is where o3-mini shines.

DeepSeek R1 is a “Thinker.” It generates massive Chain-of-Thought logs before writing a single line of code. In Cursor Composer, this feels sluggish. You stare at the spinner.

OpenAI’s o3-mini strikes a balance. It reasons, but it reasons fast. On a standard “Bug Fix” task, o3-mini delivered the patch in 27 seconds, while DeepSeek R1 took 1 minute 45 seconds, a gap of 78 seconds (mostly due to network congestion and verbose thinking).

🥊 Round 3: Integration & Ecosystem

DeepSeek R1 is available via API, but its stability is currently volatile. In our 7‑day test window, we observed roughly 15–20% timeouts/failures around peak Asia hours (08:00–11:00 WIB) in agent workflows. This may vary by region and provider.
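If you run agents against a flaky endpoint, wrapping each call in a retry with exponential backoff softens timeouts like these. The sketch below is a generic pattern, not something Cline or Cursor exposes; `call_with_retry`, its parameters, and the simulated request are all hypothetical, and it assumes your client raises `TimeoutError` or `ConnectionError` on failure.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    request: Callable[[], T],
    max_attempts: int = 4,
    base_delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `request`, retrying on timeouts with exponential backoff plus jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return request()
        except (TimeoutError, ConnectionError):
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay_s * 2 ** (attempt - 1)  # 1x, 2x, 4x, ...
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise ValueError("max_attempts must be at least 1")


# Simulated endpoint that times out twice, then succeeds.
attempts = {"count": 0}


def simulated_request() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TimeoutError("simulated peak-hour timeout")
    return "patch"


result = call_with_retry(simulated_request, base_delay_s=0.01)
print(result, "after", attempts["count"], "attempts")
```

Retries cost extra tokens and wall-clock time, so cap `max_attempts` rather than looping forever.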

OpenAI o3-mini, backed by Microsoft Azure infrastructure, offered 99.9% uptime. Furthermore, tools like Windsurf and Cursor have “native” optimizations for OpenAI models (function calling, structured output), areas where DeepSeek sometimes hallucinates.
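Whichever model you use, it is worth validating a tool call before executing it, since a hallucinated argument can otherwise reach your file system or API. Here is a minimal sketch using only the standard library. The `read_file` tool, its schema, and `validate_tool_call` are hypothetical examples in the JSON-schema style commonly used for function calling, not any vendor's exact format.

```python
import json

# Hypothetical tool definition (illustrative only).
READ_FILE_TOOL = {
    "name": "read_file",
    "parameters": {
        "type": "object",
        "properties": {
            "path": {"type": "string"},
            "max_lines": {"type": "integer"},
        },
        "required": ["path"],
    },
}

_JSON_TYPES = {"string": str, "integer": int, "boolean": bool, "number": (int, float)}


def validate_tool_call(tool: dict, name: str, raw_arguments: str) -> dict:
    """Parse a model-produced tool call; raise ValueError if it is malformed."""
    if name != tool["name"]:
        raise ValueError(f"unknown tool: {name!r}")
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        raise ValueError(f"arguments are not valid JSON: {exc}") from exc
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")

    schema = tool["parameters"]
    missing = [key for key in schema["required"] if key not in args]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")

    for key, value in args.items():
        if key not in schema["properties"]:
            raise ValueError(f"unexpected argument: {key!r}")
        expected = _JSON_TYPES[schema["properties"][key]["type"]]
        # bool is a subclass of int in Python, so reject it unless bool is expected.
        is_bool = isinstance(value, bool)
        if (is_bool and expected is not bool) or not isinstance(value, expected):
            raise ValueError(f"argument {key!r} has the wrong type")
    return args


print(validate_tool_call(READ_FILE_TOOL, "read_file", '{"path": "app/page.tsx"}'))
```

On a `ValueError`, an agent loop can feed the error message back to the model and ask it to retry instead of executing a broken call.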

🤔 Decision Matrix: DeepSeek R1 vs o3-mini for SMBs

Choose DeepSeek R1 (Best for Indie) If…

- You use Autonomous Agents (Cline/Roo).

- You are refactoring huge legacy files (Batch processing).

- Your budget is strictly limited ($0 – $20/mo).

- You don’t care about data privacy (Personal projects).

Choose OpenAI o3-mini (Best for SMBs) If…

- You use Cursor / Windsurf for live coding.

- You need immediate answers (Low Latency).

- You work in Enterprise (SOC2 Compliance).

- You need reliable Function Calling.

🕵️ Analyst’s Note: The “Hybrid” Strategy

Don’t choose one. Use both.

“In my third test session, DeepSeek R1 solved a circular dependency in a Supabase auth flow that stumped o3-mini for 8 minutes. But when I tried the same logic in Cursor Composer for live editing? DeepSeek’s spinner made me switch back to o3-mini instantly.”

My recommended config:

- Main Chat: OpenAI o3-mini (for speed/logic balance).

- Composer/Agent: DeepSeek R1 (via API key) for heavy refactoring tasks where I can walk away and get coffee.
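If you script your own tooling around this split, the routing rule is simple enough to write down. The sketch below is one possible reading of the config above: the `Task` type, the task kinds, and `pick_model` are hypothetical, and the model strings are plain labels rather than verified API identifiers.

```python
from dataclasses import dataclass

FAST_MODEL = "o3-mini"       # label only; check your provider's model ID
BATCH_MODEL = "deepseek-r1"  # label only; check your provider's model ID


@dataclass(frozen=True)
class Task:
    kind: str          # e.g. "chat", "bugfix", "refactor", "agent"
    interactive: bool  # True when a developer is waiting on the answer


def pick_model(task: Task) -> str:
    """Route interactive work to the fast model and unattended heavy work to the cheap one."""
    if task.interactive:
        return FAST_MODEL
    if task.kind in {"refactor", "agent"}:
        return BATCH_MODEL
    return FAST_MODEL


print(pick_model(Task(kind="chat", interactive=True)))
print(pick_model(Task(kind="refactor", interactive=False)))
```

The key input is whether a human is blocked on the result, not how hard the task is. That matches the experience above, where R1 was the better solver but too slow for live editing.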

🏁 Final Verdict: DeepSeek R1 vs o3-mini

- DeepSeek R1: 9.5 (Best Value)

- OpenAI o3-mini: 9.3 (Best Experience)

“DeepSeek broke the price floor. OpenAI defended the speed ceiling.”

When analyzing DeepSeek R1 vs o3-mini, the winner depends on your wallet. If you are an indie hacker, R1 is a gift from the heavens. But for professional teams where engineering time costs $50+/hour, two minutes of waiting costs about $1.67, so saving 2 minutes with o3-mini is worth more than saving $0.50 on tokens.
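You can plug your own numbers into that trade-off. The helper below is a back-of-the-envelope sketch; the function names are made up for illustration, and it assumes the developer is fully blocked while waiting, which overstates the cost if they can switch to other work.

```python
def wait_cost_usd(hourly_rate_usd: float, seconds_waiting: float) -> float:
    """Cost of developer time spent waiting, assuming they are fully blocked."""
    return hourly_rate_usd * seconds_waiting / 3600


def faster_model_pays_off(
    hourly_rate_usd: float, seconds_saved: float, extra_token_cost_usd: float
) -> bool:
    """True when the time saved is worth more than the extra token spend."""
    return wait_cost_usd(hourly_rate_usd, seconds_saved) > extra_token_cost_usd


# The verdict's example: $50/hour, 2 minutes saved, $0.50 extra on tokens.
print(f"${wait_cost_usd(50, 120):.2f} of developer time per request")
print(faster_model_pays_off(50, 120, 0.50))
```

With a 2-minute saving and $0.50 of extra tokens, the break-even hourly rate is $15. Below that, or for unattended batch jobs where nobody is waiting, the cheaper model wins.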

🤔 FAQ: DeepSeek R1 vs o3-mini for SMBs

❓ Is DeepSeek R1 better than o3-mini for coding?

For raw logic and complex refactoring, DeepSeek R1 is comparable to OpenAI o1 but significantly cheaper. However, o3-mini is superior for live, interactive coding and other tasks requiring low latency in professional environments.

❓ How much cheaper is DeepSeek R1 vs OpenAI?

DeepSeek R1 in this benchmark ($0.14/1M input via reseller) is approximately 87% cheaper on input tokens than OpenAI’s o3-mini (~$1.10/1M input). For heavy batch processing, DeepSeek is the clear winner on cost, as long as your use case can tolerate higher latency and a less mature compliance story.

❓ Is DeepSeek R1 safe for enterprise use?

DeepSeek is a China-based provider and does not currently advertise SOC2 compliance for its hosted API. For strict enterprise data privacy, we recommend using OpenAI o3-mini (Azure/Enterprise) or running DeepSeek R1 locally (offline) via Ollama. Always review your company’s legal and compliance requirements before putting any LLM into production.

❓ Can I use DeepSeek R1 in VS Code?

Yes. You can use DeepSeek R1 inside VS Code using AI extensions like Cline (formerly Claude Dev) or Roo Code by inputting your DeepSeek API key.

❓ Does o3-mini support Function Calling?

Yes. OpenAI o3-mini has native function calling that was reliable in our tests, making it a better choice than DeepSeek R1 for building agents that need to interact with external tools or APIs.

About the Author

Wawan Dewanto (SaaS Systems Engineer)

- Built 50+ internal tools for SMBs using AI stacks.

- Specialist in optimizing “Developer Experience” (DX) for small teams.

- Tested DeepSeek R1 on local Ollama & Cloud API.